· GDPR & Compliance · 11 min read

AI Agents in Healthcare: GDPR Risks Every Practice Owner Must Understand

The MIT AI Agent Index 2025 analyzed 30 AI agents and found rapid autonomy growth with weak safety controls. Here is what that means for GDPR compliance when your practice deploys AI automation.

Key Takeaways

- The MIT AI Agent Index 2025 analyzed 30 AI agents and found autonomy is increasing faster than safety oversight across all sectors, including healthcare.

- AI agents processing patient records trigger GDPR Article 9 (special category data) and very likely require a mandatory Data Protection Impact Assessment under Article 35.

- Autonomous AI decision-making in clinical workflows activates Article 22 protections — patients have the right not to be subject to solely automated decisions with significant effects.

- The EU AI Act classifies AI systems used in healthcare as high-risk under Annex III, adding a compliance layer on top of GDPR that most independent practices are not yet prepared for.

- Insecure tailored AI automation — where agents access data beyond their defined scope, operate without audit logs, or rely on undocumented subprocessors — creates direct GDPR exposure for the practice owner.

The MIT AI Agent Index 2025 documented something that regulators and compliance professionals have been warning about for years: AI agents are gaining autonomy far faster than the governance frameworks designed to contain them. For healthcare practices in the EU, this is not an abstract technology story — it is a live GDPR compliance risk operating inside your patient data environment right now.

An AI agent, in practical terms, is software that does not simply respond to a single request. It perceives its environment, plans a sequence of actions, and executes them autonomously — often calling external tools, reading databases, and writing outputs without human review of each step. In a healthcare setting, that might mean an AI agent that schedules appointments, retrieves patient records, drafts clinical summaries, and sends communications — all as part of a single automated workflow.

What the MIT AI Agent Index 2025 Found

Researchers at MIT analyzed 30 AI agents across industries and documented three patterns that are directly relevant to healthcare GDPR compliance.

1. Autonomy Is Increasing Faster Than Oversight The agents studied in the MIT AI Agent Index 2025 were increasingly capable of multi-step, multi-tool execution without human checkpoints. In healthcare deployments, this means AI systems are making sequences of consequential decisions — accessing records, triggering communications, updating data — in ways that no single human has reviewed or approved.

2. Safety Transparency Is Weak The index found that the majority of agents studied lacked publicly documented safety mechanisms, refusal behaviors, or audit trails. For a healthcare practice, deploying an agent without documented safety controls is not a technical gap — it is a GDPR accountability failure under Article 5(2), which requires that you be able to demonstrate compliance.

3. Tool Integration Expands the Attack Surface Modern AI agents operate by calling external tools: APIs, databases, communication platforms, scheduling systems. Each tool integration is a potential subprocessor under Article 28 of GDPR, and each represents a data flow that must be mapped, assessed, and contractually governed. The MIT research found that few agent deployments maintained comprehensive documentation of their tool chains.

“The MIT AI Agent Index 2025 analyzed 30 AI agents and identified a consistent pattern: rapid capability expansion with lagging governance infrastructure.” — MIT AI Agent Index 2025

Why Healthcare Is Different: Special Category Data and Article 9

Under GDPR Article 9, health data is classified as special category data — a designation that carries the highest level of protection in European data law. Processing it requires not just a lawful basis under Article 6, but an additional explicit legal ground under Article 9(2), which in most healthcare contexts means explicit patient consent or a specific healthcare treatment purpose.

AI agents in a healthcare practice may autonomously access or process:

- Patient medical history and diagnosis records

- Medication lists and prescription data

- Appointment and treatment notes

- Medical imaging metadata

- Mental health or psychiatric records

- Referral letters containing clinical assessments

- Insurance and billing data linked to health conditions

Key GDPR exposure: An AI agent that autonomously accesses and processes health data without a documented legal basis under Article 9(2), without a completed DPIA under Article 35, and without documented human oversight triggers simultaneous violations of Articles 5, 9, 22, and 35 — in a single automated workflow.

This exposure is not hypothetical. It is the default state of most off-the-shelf AI automation tools sold to healthcare practices without compliance-first architecture. For a full breakdown of your baseline obligations, see our guide to GDPR compliance requirements for independent healthcare providers.

Mapping the MIT Findings to GDPR Obligations

Article 5(1)(f): Integrity and Confidentiality in Autonomous Systems

GDPR Article 5(1)(f) requires that personal data be processed with appropriate security, protecting against unauthorised or unlawful processing, accidental loss, destruction, or damage. For AI agents, integrity and confidentiality is not a one-time configuration — it is an ongoing operational requirement. Every tool the agent calls, every external API it connects to, and every output it generates is a potential vector for data loss or unauthorised access that must be governed, monitored, and documented.

Article 35: When AI Agents Trigger a Mandatory DPIA

AI agents that process special category health data under GDPR Article 9 almost certainly trigger a mandatory DPIA under Article 35. The GDPR requires a DPIA when processing is “likely to result in a high risk” to individuals — and autonomous AI processing of health records, combined with automated decision-making, meets this threshold in virtually all healthcare deployment scenarios. Failure to complete a DPIA before deployment is itself a GDPR violation, regardless of whether any breach subsequently occurs.

DPIA checklist for healthcare AI agent deployment:

- What personal data does the agent access?

- Does the agent process special category data (Article 9)?

- Is automated decision-making involved (Article 22)?

- What is the subprocessor chain?

- Is access scoped to minimum necessary data?

- Are agent actions logged and auditable?

Article 28: Subprocessor Mapping for AI Agent Tool Chains

Every external service an AI agent calls — an LLM API, a scheduling platform, a cloud database, a communication tool — is a potential subprocessor under Article 28. Each subprocessor relationship requires a Data Processing Agreement, and you as the data controller remain accountable for their compliance. The tool chain complexity documented in the MIT AI Agent Index 2025 means that a single AI agent deployment may involve five, ten, or more subprocessors — each of which must be identified, assessed, and contractually governed before the agent processes a single patient record.

Where Insecure Tailored AI Automation Fails in Practice

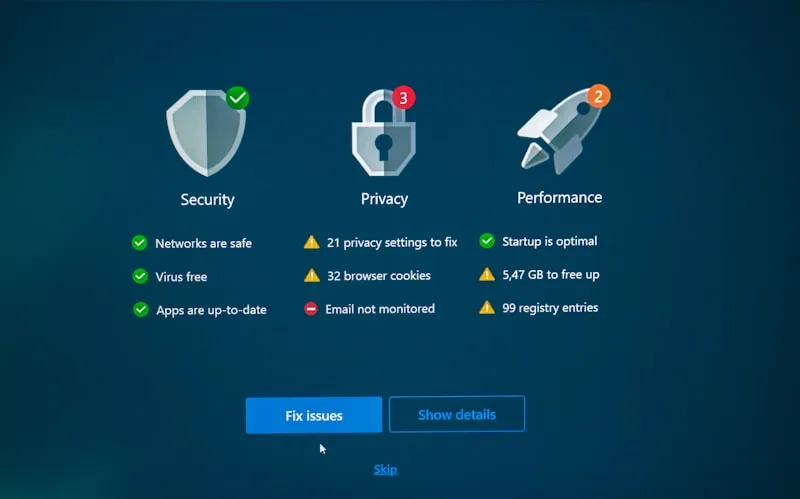

Most compliance failures with AI agents in healthcare do not come from sophisticated attacks — they come from deployment patterns that were never designed with GDPR in mind.

Failure Mode 1: No documented AI governance model The practice has deployed an AI agent but has no written policy describing what data it accesses, who owns it, and how decisions are reviewed. Under GDPR Article 5(2), the accountability principle requires that you can demonstrate compliance — undocumented deployments fail this test automatically.

Failure Mode 2: No DPIA before deployment The AI agent was onboarded quickly without a formal risk assessment. This is a standalone GDPR violation under Article 35, independent of whether any harm has occurred — and the Spanish Data Protection Agency (AEPD) has issued fines for exactly this procedural failure.

Failure Mode 3: Excessive data access for convenience The agent has been given broad database access to avoid configuration complexity. GDPR Article 5(1)(c) — the data minimisation principle — requires that access is limited to what is strictly necessary. Broad access is not a technical inconvenience to fix later; it is an ongoing violation.

Failure Mode 4: Cloud processing without architecture review Patient data is flowing through a cloud-based AI service without the practice having assessed where data is processed, under which jurisdiction, and whether Standard Contractual Clauses or other transfer mechanisms are in place. If data flows outside the EEA, GDPR Chapter V applies — and most practices cannot demonstrate compliance with this for their AI tool chains.

Failure Mode 5: No logging of AI-triggered actions The agent executes workflows, but no audit trail records which data was accessed, what decisions were made, and what outputs were produced. Without logs, you cannot respond to a data subject access request, investigate a breach, or demonstrate accountability to a supervisory authority.

What Compliant AI Agent Deployment Looks Like

Compliant AI agent deployment in healthcare is architecturally specific, not merely a matter of adding a privacy policy and ticking a consent box.

- Data mapping: Every data point the agent accesses is catalogued, with legal basis documented for each data type before the agent is activated.

- Architecture decision (on-prem / hybrid / controlled cloud): The processing location is a compliance decision, not just a cost decision — see our analysis of on-premise AI architecture for healthcare data sovereignty and hybrid AI architecture in healthcare.

- Access scoping: The agent’s permissions are defined to the minimum necessary for its specific function — not the broadest access that is convenient for the vendor.

- Logging and auditability: Every agent action is logged with sufficient detail to reconstruct what data was accessed, when, and what was produced — enabling data subject rights responses and breach investigations.

- Documented compliance reasoning: The DPIA, legal basis assessments, and governance decisions are written, dated, and version-controlled — not implied or assumed.

- Subprocessor audit: Every tool the agent calls is identified, assessed, and covered by a compliant Data Processing Agreement under Article 28.

For dental and specialist practices specifically, see our guide to compliant AI automation for dental clinics, which covers these deployment patterns in a sector-specific context.

Key Questions for Practice Owners Evaluating AI Agents

Do AI agents in healthcare require a DPIA under GDPR? Yes, in virtually all cases. GDPR Article 35 requires a Data Protection Impact Assessment when processing is likely to result in high risk to individuals. AI agents that access health records, process special category data, or make or support automated decisions affecting patients meet this threshold. The DPIA must be completed before the agent is deployed, not after. The Spanish Data Protection Agency (AEPD) actively enforces this requirement across healthcare businesses of all sizes.

What does GDPR Article 5(1)(f) mean for AI agents handling patient data? Article 5(1)(f) requires that patient data is processed with appropriate security — protecting integrity, confidentiality, and availability. For AI agents, this means that every component of the agent’s tool chain must be assessed for security controls, that access is authenticated and logged, and that the practice can demonstrate (under Article 5(2)) that these controls are actively maintained. A vendor’s security certification does not substitute for your own compliance demonstration.

How do I identify subprocessors in an AI agent workflow? Start with every API call the agent makes. Each external service receiving or processing personal data is a potential subprocessor. Request a sub-processor list from your AI vendor — this is your right under GDPR. Check whether each subprocessor has a signed Data Processing Agreement with your vendor, and whether your vendor notifies you of changes to their sub-processor chain. Many healthcare practices discover undisclosed sub-processors only after an incident; a pre-deployment audit prevents this.

Is autonomous AI processing of health data permitted under GDPR? It can be, but only with the correct legal basis under both Article 6 and Article 9(2), a completed DPIA, documented human oversight mechanisms where required under Article 22, and contractually governed subprocessors. Autonomous processing of health data without this framework is not a compliance gap to address in the future — it is a current violation. If your AI agent is already deployed without this documentation, the correct action is to pause the data processing and complete the compliance work before resuming.

What does the EU AI Act require for AI agents in healthcare? The EU AI Act classifies AI systems used in healthcare as high-risk under Annex III, which includes systems used in the management and operation of critical infrastructure and those used to assess the health or safety of natural persons. High-risk AI systems require conformity assessments, registration in the EU AI systems database, ongoing risk management systems, technical documentation, and transparency obligations to users. This sits on top of — not instead of — GDPR obligations. Practices deploying AI automation should assess their systems against both frameworks simultaneously.

The Bottom Line

The MIT AI Agent Index 2025 confirms what the GDPR anticipated: autonomous systems operating on sensitive data without adequate governance create compounding legal risk that grows with every automated action the agent takes unchecked. For independent healthcare practices in the EU, this is not a future problem — AI agents are being sold and deployed today, often without the compliance infrastructure that makes them legal to operate.

If your practice is evaluating or has already deployed AI automation, the first step is understanding exactly what data your systems are accessing, who processes it, and whether you can demonstrate compliance to the AEPD or your national supervisory authority. Start with our free Automation Roadmap to identify your exposure points, or explore MedCore Private AI — our on-premise solution built for healthcare GDPR compliance from the architecture up.

This article references publicly available research from the MIT AI Agent Index 2025. It is for informational purposes and does not constitute legal advice. Healthcare organisations should consult qualified legal and compliance professionals regarding their specific GDPR obligations.